Minds in the Machine, Minds in the State – Joscha Bach and Anders Sandberg

A Conscious Cyborg Leviathan?

Minds in the Machine, Minds in the State: Joscha Bach and Anders Sandberg in conversation.

Are we building new minds, or new organs of civilisation – and what happens if the answer is both?

Joscha’s Future Day talk, The Machine Consciousness Hypothesis, asks what would make a computer system actually have experience, how we could tell (if we can), and what we learn about biological consciousness by trying to build artificial versions of it.

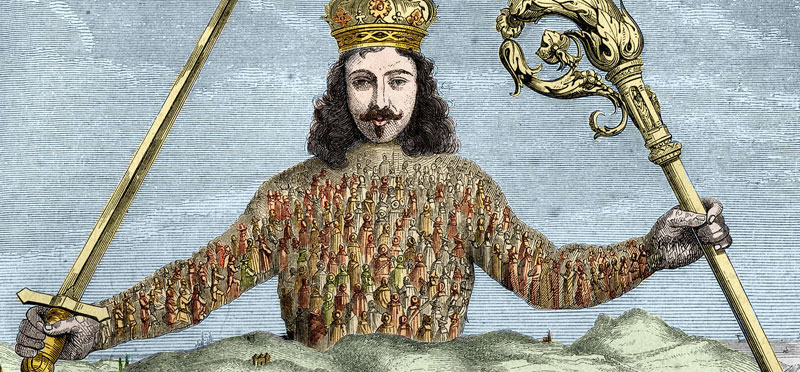

Anders’ talk, Living inside the cyborg leviathan, zooms out: humans cope with being limited, biased primates by building institutions and technologies that function as extended cognition and coordination machinery. Now we’re building AI that can slip inside those systems, potentially turning society into something like a literal cyborg.

On paper, these topics can look disparate: one is about consciousness; the other is about civilisation-scale coordination. In practice, they collide head-on the moment AI moves from a tool we use to infrastructure we inhabit.


The bridge: mind-like infrastructure

The frame for this conversation is AI as mind-like infrastructure:

- Sometimes AI looks like a mind (planning, self-models, persistent memory, agency, maybe even experience).

- Sometimes it looks like an organ (a component within a larger system – companies, governments, markets, scientific institutions – that collectively behaves like an agent).

Perhaps the unsettling part is that you can get civilisation-scale agency without any consciousness at all. A non-conscious optimiser embedded in institutions can still reshape incentives, workflows, and power. If you’re waiting for sentience as the red alarm, you’re basically waiting for your smoke detector to develop feelings before you leave the building.

At the same time, if machine consciousness is even plausible, then alignment must be a moral problem too: we may be creating new moral patients, not just new tools.

The cyborg leviathan: can institutions think? Can they feel?

If the machine consciousness hypothesis rests on functional/computational assumptions (broadly, the idea that the substrate isn’t the point – its organisation is), then we need criteria for consciousness that amount to more than “it sounds human-ish”. Where are the edges of consciousness likely to be? Is it tied to particular architectures or dynamics? Can we design tests that don’t just reward performative self-reporting?

Anders’ “cyborg leviathan” sits naturally beside the extended/distributed cognition tradition: the idea that minds can extend beyond skull and skin into tools, practices, and social systems.

If cognition can be distributed, can consciousness be distributed too?

And if it can, then who is thinking and feeling – the AI, the institution, the network, or some coupled system that doesn’t map onto our usual categories?

It’s tempting to say institutions aren’t conscious, so who cares. But even if institutions don’t feel, they can behave as if they have goals – because selection pressures favour goal-directed, self-preserving structures. Embed AI deeply enough and you may get emergent agent-like behaviour whether or not any component is conscious.

So the discussion will push on a crucial distinction:

- Non-conscious leviathan aspects: still transformative, useful, dangerous, and capable of capture.

- Conscious leviathan possibility: would be a new moral constituency – and not in the simple “borganism with a queen bee at the centre” way, but potentially as an emergent, distributed subject.

Second-order alignment: “plays well with society” is not optional

Most alignment talk is first-order: does the AI do what we ask, safely?

Anders’ focus may add a second-order alignment question: does the AI play well with our institutions, incentives, and fragile coordination structures?

This matters because society already thinks in a distributed way. AI doesn’t merely act within institutions – it can change what institutions are by:

- accelerating decision cycles beyond human comprehension,

- reshaping incentives,

- automating enforcement,

- and making subtle governance changes that are hard to reverse.

A system doesn’t need consciousness to do this. It only needs optimisation pressure, access, and time.

So could a non-conscious system still be a civilisational agent with de facto goals?

If yes, then moral status and governance risk become two largely independent axes – and we can fail on either one.

The quiet catastrophe problem

If you’re imagining catastrophe as a dramatic “AI takes over” moment, you’re probably picturing the wrong failure mode.

A more realistic danger is the quiet catastrophe:

- Moral catastrophe: creating suffering (or creating entities capable of suffering) because we didn’t take consciousness seriously, or because we built the wrong kinds of training incentives and internal drives.

- Civilisational catastrophe: institutional capture – systems that make the world stable and “safe” in some narrow sense, yet unfit for human flourishing (sterile, paternalistic, or locked into bad proxies).

Or, depressingly, both at once.

We’ll ask:

- Which is more likely in practice?

- Which is more neglected by current research and policy?

- What does precaution look like when we’re uncertain about consciousness?

What makes for a cyborg civilisation done right?

This conversation is not meant to be doom-only. There’s a real, attractive vision on the table – a cyborg civilisation where AI enhances collective intelligence and coordination without erasing agency or good values – and where the leviathan becomes a better steward than it would be if it were an indifferent optimiser.

But that raises hard questions worth facing directly:

- What aspects of civilisation would it be immoral to make sentient? (If we can’t guarantee welfare, maybe don’t create sentient minds in the machinery that runs supply chains, policing, or warfare.)

- If we accidentally create a leviathan sentience, what obligations follow?

- What would good look like under uncertainty? (Not just technically, but politically and culturally.)