Are we fit for the future?

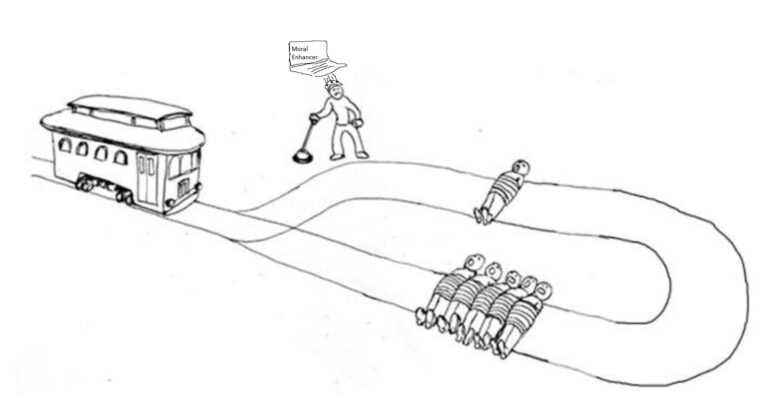

Panelists James Hughes and PJ Manney (both at IEET) and Pramod Nayar (Hyderabad Uni) discuss humanity's fitness for the future, covering important questions including: Are we morally equipped to deal with humanity's grand challenges? If the majority of the population of a democratic state were morally deficient, would it be okay to morally enhance the population, or…