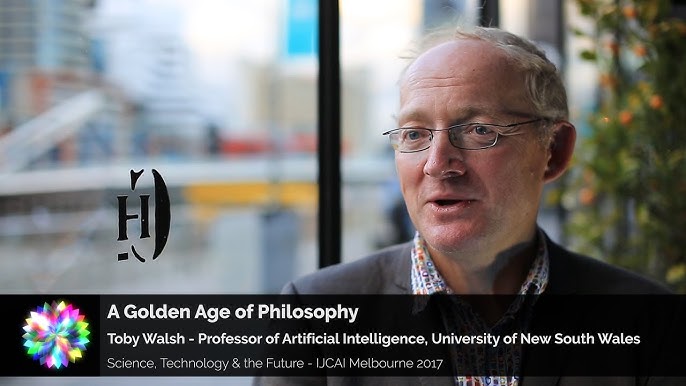

AI Ethics: A Golden Age of Philosophy – Toby Walsh

The Golden Age of Philosophy: Navigating the Ethics of AI with Toby Walsh

In an era where technology evolves faster than our social structures, artificial intelligence (AI) is pushing humanity toward a “Golden Age of Philosophy.” In a compelling interview, Toby Walsh, Professor of Artificial Intelligence at the University of New South Wales, explores why the rise of machines is less about “killer robots” and more about the fundamental questions of what we value as a society.

You can watch the full video here: AI Ethics: A Golden Age of Philosophy – Toby Walsh

A Mirror for Mankind

Walsh suggests that building machines that think is one of the most profound questions we can ask over the next century [00:52]. By creating intelligence in silicon, we are forced to confront a humbling possibility: that our own intelligence might not be as “special” as we once thought [01:03]. This technological mirror doesn’t just improve our quality of life; it challenges us to define what makes us human.

Why Philosophy is Making a Comeback

For decades, philosophy has often been sidelined by linguistics and abstract theory. However, AI is forcing the discipline back to center stage. Because machines require explicit programming, we can no longer rely on the vague, “common sense” moral decisions humans make in split seconds [02:10].

- The Trolley Problem in Reality: While often dismissed as a thought experiment, the trolley problem illustrates a core challenge: we must decide our moral values upfront before a machine ever hits the road [03:00].

- Super-Morality: Walsh argues that instead of just replicating fallible human morals, we should aim for “super-moral” machines that hold higher standards than our own often messy and inconsistent values [04:30].[1]

…all of the decisions that we start making in our society once we let machines make them, then we’re gonna have to be much more precise about what values they should have. Even that’s already highlighted some of the challenges, and I said we want our values but “who is us?” and “how do we resolve the conflicts between different members of the society?” – and you may have different values than I have. So which values do they end up being?

Then it’s really difficult, if not near impossible, to actually work out what our values are. We’re very poor at articulating them and we certainly can’t get them from just observing our actions because … we’re just human, and we’re very weak in certain aspects of our lives – we do things that we know are not reflecting our values, just because we don’t have the willpower not to do them. And then our morals change over time – our morals today are probably different than they were a decade ago. So how do we allow this moral drift to happen in our machines as well?

Toby Walsh – 2017-09-05 in interview with Adam Ford

I think there’s no reason why we shouldn’t be holding the machines up to higher morals than ours – knowing that our morals are actually quite fallible and quite messy and dirty in places. We should be actually setting out with the goal of making sure our machines are actually super-moral as well as super-intelligent.

Challenging the Singularity

Is an “intelligence explosion” inevitable? Walsh dedicates a portion of his work to exploring why the Singularity might never happen [04:36]. He points out that, like the speed of light or quantum uncertainty, there may be fundamental limits to intelligence itself [05:43]. Just as a person can’t “out-think” a loaded roulette wheel, there are problems, such as the conflicts in the Middle East, that intelligence alone may never be able to solve [06:27].

Near-Term Risks: Incompetence over Malevolence

While science fiction focuses on sentient, evil AI, Walsh is more concerned with “stupid AI.” The immediate danger isn’t malevolence, but incompetence—giving life-or-death decision-making power to machines that aren’t capable of handling it responsibly [10:26].

Other pressing concerns include:

- Technological Unemployment: While technology creates new jobs, there is no guarantee it will replace all the ones it destroys [11:08].

- Algorithmic Discrimination: We must be careful not to hand over decisions regarding justice and incarceration to biased algorithms, as these choices sit at the heart of our humanity [13:02].

The Future is a Choice

Perhaps the most empowering takeaway from Walsh is that the future is not a fixed destination we are drifting toward. Instead, the future is the product of the choices we make today [14:03]. Just as societies made deliberate choices about how to regulate television decades ago, we are currently making the choices that will define our relationship with AI for the next century [15:48].

As we move forward, we have the opportunity to let robots handle the “drudgery” while we focus on the things we truly value [11:40]. The question is: are we ready to do the philosophical work to get there?

Follow Toby Walsh’s insights by watching the full interview and checking out his book, Android Dreams.

[1] Supermorality – This is very similar to my view that AI could one day become more moral than humans.